Has agentic AI hit a ceiling?

It was supposed to be the natural evolution of generative AI: a tool that could “make decisions” with holistic and relevant contextual knowledge, plan and articulate independently, learn and adapt over time, pursue goals autonomously, and turn its plans into action. In other words, get things done with minimal human intervention.

Depending on what you read online, agentic AI is either a complete failure or just one iteration away from solving the world’s problems. In between these extremes is the truth of the matter. But before we get into that — what, exactly, is agentic AI?

Understanding the Basics of Agentic AI

To understand agentic AI, you first need to understand AI agents, large language models (LLMs), and retrieval-augmented generation (RAG).

AI Agents

AI agents are implementations built around containerized LLMs with hard-coded procedural logic and token-based memory retrieval approaches. They are autonomous, goal-oriented systems that use artificial intelligence to understand the environmental context of the problem statement, make decisions within that context, and take multi-step actions to pursue specific goals. They can access external data sources and applications as necessary and use RAG technology to solve diverse problems using conversation history summaries, unstructured inputs, and outside information sources such as databases, the internet, or other software.

AI agents go well beyond single-interaction chatbots. Typically there are multiple agents, leveraging a single large language model in a “swarm,” and they share information among themselves and with one or more orchestrators. They send inputs and outputs to each other in a relatively unstructured manner, relying heavily on the LLM for both the logical understanding and contextual comprehension of a task. They connect to enterprise data and apps, extract the required information, perform advanced reasoning, planning, and execution of tasks, and can work semi-autonomously to solve complex problems.
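
To make the orchestrator idea concrete, here is a minimal sketch in Python. Everything in it is an assumption for illustration: the `Agent` and `Orchestrator` classes, the `fake_llm` stand-in, and the idea of a fixed `plan` are hypothetical and not taken from any particular agent framework. It shows only the pattern described above, in which several agents call one shared model and pass loosely structured text to each other.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


def fake_llm(prompt: str) -> str:
    """Hypothetical stand-in for a real model call; it just echoes the prompt."""
    return f"[model output for: {prompt}]"


@dataclass
class Agent:
    name: str
    instructions: str
    llm: Callable[[str], str]  # every agent shares the same model function

    def run(self, task: str, shared_notes: List[str]) -> str:
        # The agent sees the task plus whatever earlier agents wrote,
        # passed along as unstructured text.
        context = " | ".join(shared_notes)
        prompt = f"{self.instructions}\nTask: {task}\nNotes: {context}"
        return self.llm(prompt)


@dataclass
class Orchestrator:
    agents: Dict[str, Agent]
    shared_notes: List[str] = field(default_factory=list)

    def handle(self, task: str, plan: List[str]) -> List[str]:
        # Run the agents in the order given by the plan and collect their output.
        for agent_name in plan:
            output = self.agents[agent_name].run(task, self.shared_notes)
            self.shared_notes.append(f"{agent_name}: {output}")
        return self.shared_notes


if __name__ == "__main__":
    swarm = {
        "retriever": Agent("retriever", "Find the relevant records.", fake_llm),
        "planner": Agent("planner", "Propose next steps.", fake_llm),
    }
    for note in Orchestrator(swarm).handle("Restock site 12", ["retriever", "planner"]):
        print(note)
```

Note that the only thing connecting the agents here is free text in `shared_notes`, and all of the actual reasoning is delegated to the model call.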

Large Language Models

Large language models are the engines that power generative and agentic AI. They are a specific type of generative model typically trained to understand and create human-like text by leveraging massive datasets, complex training cycles, and sophisticated neural network architectures. They are essentially word association engines that have been extensively trained on diverse datasets and can be fine-tuned through statistical pattern recognition between input and output pairs.

Retrieval-Augmented Generation

Retrieval-augmented generation is a hybrid approach that combines the capabilities of generative AI models with a search-like retrieval mechanism to enhance the quality, accuracy, and relevance of generated content by enriching LLMs with relevant and specific contextual information. In this way it can amplify existing large language model capabilities through real-time data sourcing. It enables the LLM to access up-to-the-minute information outside its known universe of training data, such as news, research, statistics, and internal databases, to answer user questions. The primary goal of retrieval-augmented generation is to strengthen the factual reliability of generative AI outputs.
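
The retrieve-then-generate flow can be sketched in a few lines of Python. This is a toy illustration under stated assumptions: the `DOCUMENTS` list, the `retrieve` and `build_prompt` helpers, and the keyword-overlap scoring are all hypothetical, and the scoring simply stands in for whatever search index a real system would use. The final model call is left out; the sketch stops at the enriched prompt.

```python
import re
from typing import List, Set

# Hypothetical "internal database" of up-to-date facts the model was not trained on.
DOCUMENTS = [
    "Warehouse A holds 120 pallets of cement as of this morning.",
    "The overtime policy requires manager approval for shifts over 10 hours.",
    "Route 7 is closed for repairs until Friday.",
]


def tokenize(text: str) -> Set[str]:
    """Lowercase the text and split it into a set of word and number tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, documents: List[str], top_k: int = 1) -> List[str]:
    """Rank documents by how many tokens they share with the question."""
    query_tokens = tokenize(question)
    ranked = sorted(
        documents,
        key=lambda doc: len(query_tokens & tokenize(doc)),
        reverse=True,
    )
    return ranked[:top_k]


def build_prompt(question: str, documents: List[str]) -> str:
    """Enrich the question with retrieved context before it goes to the model."""
    context = "\n".join(retrieve(question, documents))
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"


if __name__ == "__main__":
    print(build_prompt("Is Route 7 closed?", DOCUMENTS))
```

The model never sees the whole document store, only the passages the retrieval step selects, so the quality of the answer depends directly on the quality of that selection.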

How Agentic AI Differs from Traditional AI

Currently, when most people use the term “traditional AI”, they are actually referring to generative AI because that’s what we’ve become accustomed to saying over the last three years.

In reality, traditional AI analyzes historical data to identify patterns, derive rules from those patterns and then use them to perform specific tasks and make predictions. In contrast, generative AI autonomously creates new content that mimics or extends a pattern embedded within the training data. Traditional AI is trained to classify and predict based on predefined labels or ranges, whereas generative AI is proactive, adaptable to a wider range of prompts, and can generate novel outputs that maintain the characteristics of the training data.

Agentic AI takes automation and intelligent operations to a level well beyond the capabilities of generative AI. Autonomous agents are currently able to:

- Perceive and interpret inputs from human and non-human sources

- Interact with artificial intelligence via large language models

- Use RAG technology to access real-time information

- Gather and process data

- Perform contextually appropriate reasoning

- Plan and execute actions leveraging the right tools

Agentic AI has also been sold as a system that can:

- Act independently and autonomously

- Execute multi-step actions with minimal human oversight

- Proactively anticipate needs and adapt to changing conditions

- Automate complex workflows

- Make accurate decisions

- Learn and improve through feedback loops

But has it lived up to the hype?

Agentic AI Isn’t Ready to Solve Complex Problems in the Enterprise

While agentic AI is a significant advancement in artificial intelligence, it is not delivering on the promise of autonomy and the ability to solve complex problems, incorporate self-learning, and improve over time.

Platforms are limited in their data retrieval process because they rely on token-based searches and lack the ability to request and find the information needed to solve the problem. More importantly, agents are unable to structure their process based on the problem because they are working within a static architecture, predetermined logic, and immutable strategies. If agentic AI is ever going to do everything we were promised it would do, a dynamic approach to information retrieval and problem solving is needed.

It would appear that there is something inherently flawed in the agentic approach to AI.

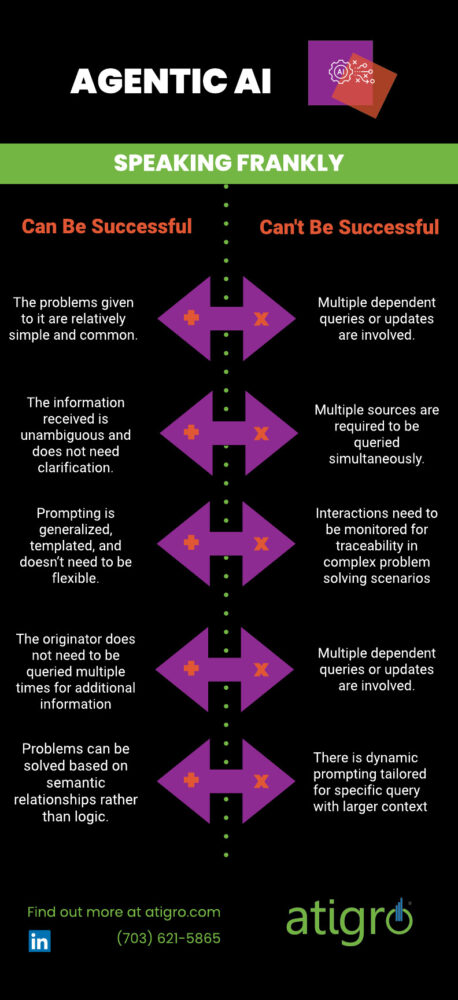

Agentic AI can be successful when:

- The problems given to it are relatively simple and common

- The information received is unambiguous and doesn’t need to be clarified

- Prompting is generalized, templated, and doesn’t need to be flexible

- The system or originator does not need to be queried multiple times for additional information

- Problems can be solved based on semantic relationships rather than logic

Unfortunately, the instances where agentic AI cannot successfully solve a problem vastly outnumber the instances where it can. These include, but are not limited to, times when:

- Partial steps and states of a process need to be edited and/or saved

- Multiple dependent queries or updates are involved

- Multiple sources (tables or other data sources) must be queried simultaneously

- Real-time changes in business rules or data fields need to be made

- Business and security rules need to be enforced and validated

- Integration with APIs, SQL connectors, or other IT systems is required

- Prompts need to be dynamically tailored to a specific query with a larger context

- Interactions need to be monitored for traceability in complex problem-solving scenarios

Agentic AI harnesses LLMs and expects them to solve the logical aspects of a problem while interpreting the language and context of the problem without any clear methodology for doing so. Because of this, agentic AI cannot be trusted to produce accurate, unambiguous, and consistent results.

Configuring and testing an agentic AI system requires a great deal of time, effort, and money. Once you’ve invested it, you want outputs that an SME with years of experience will not quibble with.

Realizing the Full Potential of AI

Agentic systems can’t break down vast, complicated goals into manageable modular sub-tasks. Nor can they make nuanced, adaptive decisions for specialized tasks in real time based on their understanding of the current situation.

To solve the problems that really matter in business, like managing a global supply chain or optimizing workflows, something more than agentic AI is needed, and it must provide:

- Configurability for every component of the system

- Proactive actions based on problem understanding, feedback, business rules, assumptions, and known strategies

- The ability to enforce security rules

- The ability to show, log, and learn from each process step and the inputs and outputs for each of those steps

- Accurate decision-making

- The ability to break complex problems down into modular and independently manageable pieces to ensure heterogeneous information is processed in a standard fashion

- A way of ensuring that mathematical, logical, analytical, operational, and transformation problems are solved without error and with contextual relevance

- Modular components that can be decoupled to reduce factual and reasoning errors due to lossy transformations
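
Several of these requirements (modular steps, rule enforcement, and a log of every step's inputs and outputs) can be illustrated with a small Python sketch. It is a hypothetical design, not a description of any existing product: the `Step` and `Pipeline` classes, the order-pricing steps, and the `within_budget` rule are all invented for the example.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List

State = Dict[str, Any]


@dataclass
class Step:
    name: str
    action: Callable[[State], State]  # one small, independently testable piece


@dataclass
class Pipeline:
    steps: List[Step]
    rules: List[Callable[[State], bool]]  # business or security rules to enforce
    log: List[Dict[str, Any]] = field(default_factory=list)

    def execute(self, state: State) -> State:
        for step in self.steps:
            before = dict(state)
            after = step.action(dict(before))
            ok = all(rule(after) for rule in self.rules)
            # Record the inputs and outputs of every step for traceability.
            self.log.append(
                {"step": step.name, "input": before, "output": dict(after), "ok": ok}
            )
            if not ok:
                raise ValueError(f"Rule violated after step '{step.name}'")
            state = after
        return state


# Hypothetical steps and rule for a purchase order.
def price_order(state: State) -> State:
    state["total"] = state["quantity"] * state["unit_price"]
    return state


def apply_discount(state: State) -> State:
    state["total"] = round(state["total"] * (1 - state["discount"]), 2)
    return state


def within_budget(state: State) -> bool:
    return state["total"] <= state["budget"]


if __name__ == "__main__":
    pipeline = Pipeline(
        steps=[Step("price", price_order), Step("discount", apply_discount)],
        rules=[within_budget],
    )
    order = {"quantity": 10, "unit_price": 5.0, "discount": 0.1, "budget": 100}
    print(pipeline.execute(order)["total"])
    for entry in pipeline.log:
        print(entry["step"], entry["ok"])
```

Because the arithmetic lives in ordinary, deterministic functions rather than in a model's output, each step can be tested on its own, and a rule violation stops the run at a known, logged point.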

An AI system that could integrate with enterprise data to reliably solve complex problems and preserve accuracy while complying with business and security rules would completely change the way organizations interact with it. Instead of the business bending over backwards to accommodate the AI, the AI would seamlessly and effortlessly accommodate the business. It could solve the most intractable problems and turn long-sought-after goals into reality.

Let’s look at two hypothetical use cases.

Construction

Construction companies often encounter challenges delivering services, supplies, and equipment to job sites in a way that is profitable to the business. An AI system with the capabilities described above could:

- Manage inventory, including procurement and monitoring stock levels and budgets

- Automate work order creation for services, rentals, or purchases and refine and update them as conditions change

- Manage equipment rentals by routing equipment and supplies, scheduling equipment setup, monitoring performance, and optimizing maintenance schedules

- Automate human resources cost management including hours tracking and approval, allocation of resources (full-time and overtime), and adjusting work schedules to accommodate last-minute changes

Supply Chain

Last-mile route optimization is critical to building a profitable supply chain. The last mile is the most customer-facing, individualized, and unpredictable segment, and consequently also the most expensive. One survey found that last-mile services account for 41% of overall costs and that the amount businesses absorb to stay competitive can erode profit by 26%. AI agents that were an effective, reliable team at scale could:

- Analyze historical data, current orders, weather patterns, seasonal trends, and even real-time social media sentiment (e.g., posts on X about shopping trends) to predict demand at a granular level

- Integrate real-time data feeds (e.g., weather APIs, traffic data from Google Maps, or customer order updates) into the AI system

- Recalculate routes on the fly as conditions change, e.g., if a driver is delayed or a customer requests a last-minute change

- Optimize the use of your delivery fleet by analyzing vehicle capacity, driver availability, fuel efficiency, and maintenance schedules in real time
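
As a rough illustration of recalculating a route on the fly, the Python sketch below re-plans the remaining stops whenever the stop list changes. It uses a simple nearest-neighbor heuristic on straight-line distances, which is an assumption chosen for brevity; it does not produce optimal routes and ignores traffic, capacity, and time windows. The `plan_route` function, stop names, and coordinates are all hypothetical.

```python
import math
from typing import Dict, List, Tuple

Point = Tuple[float, float]


def plan_route(start: Point, stops: Dict[str, Point]) -> List[str]:
    """Greedy nearest-neighbor ordering of the stops, starting from `start`."""
    remaining = dict(stops)
    position = start
    route: List[str] = []
    while remaining:
        # Always drive to the closest stop that has not been visited yet.
        nearest = min(remaining, key=lambda name: math.dist(position, remaining[name]))
        route.append(nearest)
        position = remaining.pop(nearest)
    return route


if __name__ == "__main__":
    stops = {"A": (1.0, 0.0), "B": (5.0, 0.0), "C": (2.0, 0.0)}
    print(plan_route((0.0, 0.0), stops))  # ['A', 'C', 'B']

    # The driver has delivered A. Customer C cancels and a new order D arrives,
    # so the route is recomputed from the driver's current position.
    updated = {"B": (5.0, 0.0), "D": (1.0, 1.0)}
    print(plan_route((1.0, 0.0), updated))  # ['D', 'B']
```

The re-planning itself is cheap. The hard part in practice is feeding it accurate, current inputs, which is where the real-time data feeds listed above come in.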

Many of the conversations about using AI at the business level have centered on use cases that increase productivity and lower costs, with the potential for streamlining workflows and automating processes. In order to turn these goals into reality, current agentic AI systems must evolve so that they can operate autonomously and securely with a minimum of human intervention. Until then, we can only trust agentic AI if it is partnered with and monitored by a human being almost every step of the way.

About Atigro

Atigro is a proven ERP transformation firm that pairs its modular augmentation capabilities with AI-native frameworks. Atigro’s experience and expertise generate the rapid development and provisioning of new ERP functionality that meets dynamically changing business processes. You can learn more about implementing strategic AI capabilities to substantially improve business operations throughout your company by streamlining, automating, and optimizing workflows.